Can you face a defamation lawsuit for what your AI did?

PLUS AI laws are shaping up in Tanzania

What’s new at Develop AI?

We are building an AI course for commercial and civil lawyers so they can use AI responsibly and ethically. And we have created a second course on the law and AI for everyone else, so you can navigate using AI in your business and avoid getting sued. If this stuff is starting to keep you up at night then get in touch (email me directly on paul@developai.co.za).

Can you face a defamation lawsuit for what your AI did?

I often reminisce about the early days of Facebook (like the old GenX/Millennial I am) when you could come to the end of your Newsfeed. You would turn on your computer at work and check out your feed (which was mostly single lines of text where your “friends” explained their mood in what they believed to be a pithy way) until it told you that you were caught up. Then you would close Facebook with a smile on your face and probably go talk to someone in the real world.

In 2026 feeds never end and there is no scarcity of text to read. In a world where you can create content instantly with AI, the value of communication feels like it is being shattered. And there is greater pressure to produce more content faster. And yet behind this desperation there is still a murky legal framework that is catching up.

Sometimes I completely forget that the law exists on the Internet. It has always been flimsy, but we now work every day on AI machines that are built on illegal practice. So, let’s see what your chances are of taking on a tech company if a chatbot defames you (and what happens if you produce something with AI that defames someone else).

What happens when a chatbot defames you?

We need to test the law, but few have the financial means to do this. Brian Hood worked for Note Printing Australia (a subsidiary of the Reserve Bank of Australia) in the early 2000s, when he alerted authorities to officers at his company paying bribes to overseas agencies to win contracts to print banknotes. He was a whistleblower. Unfortunately, ChatGPT (in the early days of the app in March 2023) falsely named him as a guilty party in the scandal, claiming he had served prison time for bribery. He told Euronews he was “really shocked”. He said the mix of accurate and false information in the same output made it particularly dangerous. Three years later, we are used to AI being like this. But even though Hood’s legal team sent a letter of concern to OpenAI, he eventually dropped the case because he didn’t have the cash to fight it. OpenAI tweaked the updated version of ChatGPT to correct Hood’s role and changed the older version to return an error message when asked about him. And without cash-flush individuals who can challenge this stuff, it will keep happening without consequences.

Robby Starbuck, an American activist and filmmaker, sued Meta after its chatbot falsely linked him to the US January 6 Capitol riot in 2021 (and it said he was a Holocaust denier and a host of other things). His lawyers sent letters to get it rectified. It didn’t help, so on April the 29th 2025 they filed in Delaware Superior Court, seeking $5 million. One day after filing, Meta’s chief global affairs officer publicly apologised on X and four months later the case settled… with none of that $5 million paid. Instead, Starbuck became a Meta consultant working on reducing political bias and hallucinations in their AI models. That is like when Kramer tried to sue a coffee company for millions and settled for a lifetime of free lattes.

Even clear-cut instances of AI defamation can die for want of funding, with platforms quietly fixing errors rather than facing real accountability. There was a great article by Dario Milo and Lia Wheeler last month in South Africa’s Business Day which went into the topic of defamation in the age of AI. They say that disclaimers have given tech companies significant legal cover in some courts, though that defence doesn't hold everywhere… and won’t stop the cases coming forever.

What happens when I use AI to produce content that defames someone else?

The Dave Fanning case is actually the perfect real-world answer to this question. In October 2023, a Hong Kong-based news outlet called BNN Breaking used a chatbot to paraphrase a trending story about an unnamed Irish broadcaster on trial for sexual misconduct. Because the real broadcaster was protected by a court injunction preventing naming, the AI filled the gap by attaching a photo of a “prominent Irish broadcaster” (which turned out to be Dave Fanning, the well-known DJ who helped kickstart U2). He had absolutely nothing to do with the case. The story was then promoted by MSN, a web portal owned by Microsoft, and was visible for hours on the default homepage for anyone in Ireland using Microsoft Edge. Fanning sued both BNN and Microsoft. His lawyers said the article defamed him and that, as a result, he suffered grave damage to his reputation. BNN no longer exists. Microsoft terminated its licensing agreement with BNN but has not commented on the defamation case. The case is still active in the Irish High Court.

BNN is the clearest example yet of a publisher (not an AI company) being sued for using AI to produce defamatory content (and BNN is now defunct partly as a result), though it’s arguable that Fanning only made a move because Microsoft was also on the hook.

AI Legal Tracker

Develop AI is tracking AI regulation and legal cases globally.

The enforcement clock for Tanzanians just ran out… literally yesterday. In Tanzania the Personal Data Protection Act (PDPA) is not an AI-specific law, but because AI systems rely on massive amounts of data to learn and make decisions, it currently serves as the primary legal framework governing AI development in the country. It means people need to get explicit consent before collecting or processing personal data, and must not share it with third parties or take it out of the country without consent. This Act dictates the rules of the game for any AI model that uses personal information. A final warning was issued to public and private institutions to register for compliance, saying strict enforcement would begin today, the 9th of April.

This is a “good” law on paper, but the execution is wonky. The principles are solid and it gives citizens real legal recourse, but Tanzania has a history of using digital laws against journalists and civil society, and a vague data protection framework in the wrong hands can become a tool of surveillance rather than protection.

Where are we going?

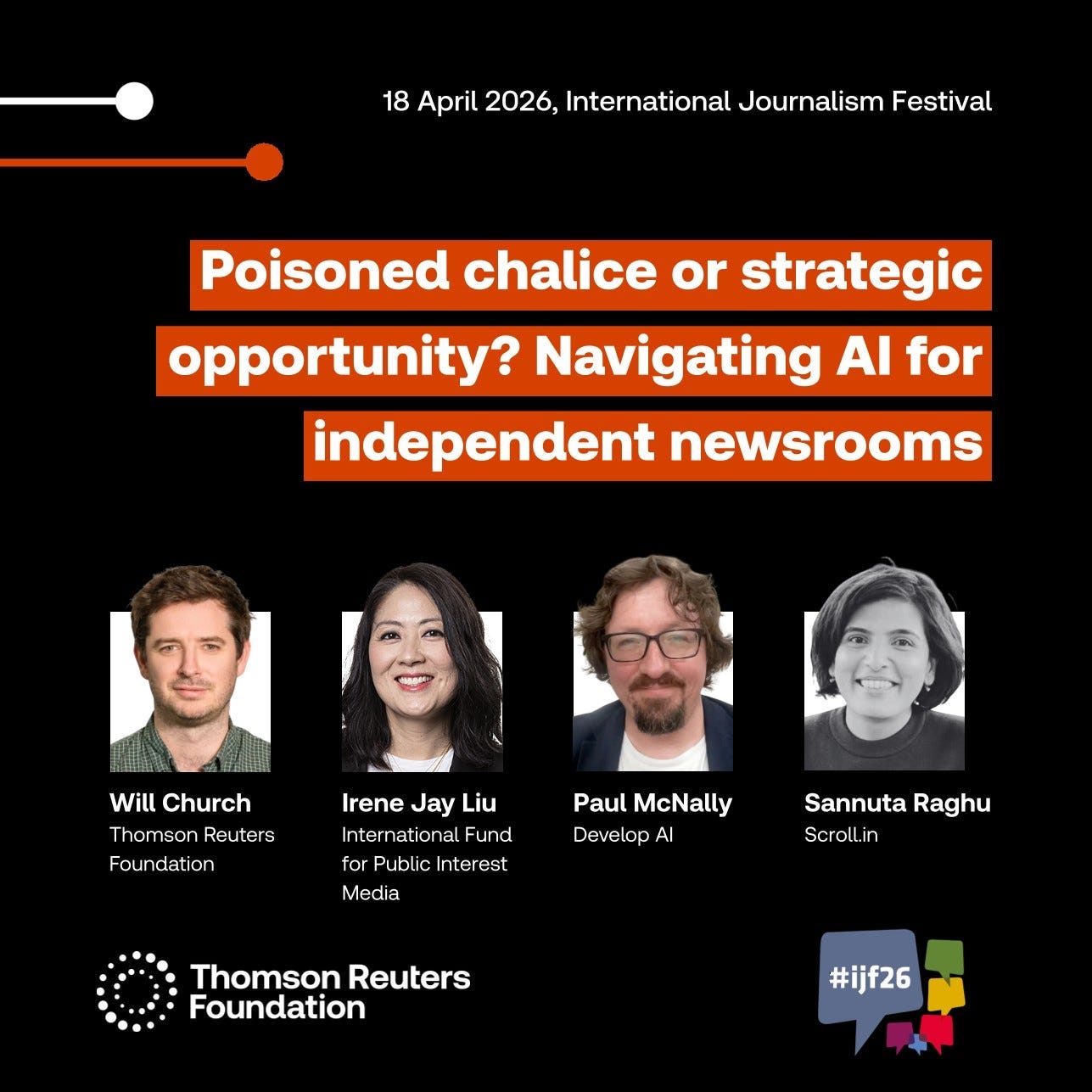

The International Journalism Festival in Perugia, Italy is next week. I will be talking on a panel about how independent newsrooms are navigating the use of AI. And later this year I will be talking at Trust Conference in London Town. For both invites I must extend a huge thank you to Thomson Reuters Foundation.

In Perugia I will also be part of a hands-on tool demo of the brand new MethodKit for Journalism and AI (which I helped create for DW Akademie). I’ll be joined by Michelle Nogales Cardozo of Muy Waso and Steffen Leidel.

See you next week. Cheers.

Develop AI is an AI consulting company that has trained and worked with 100s of organisations globally so they can effectively implement AI and develop ethical AI prototypes and policies.

Contact Develop AI to arrange an AI training (online and in person) for you and your team. And ask about our mentoring so your business can build efficient AI workflows.

We have implemented AI strategies for Thomson Reuters Foundation, DW Akademie, Public Media Alliance, IMS (International Media Support), Agence Française de Développement and others to improve the impact of AI globally.

Email me directly on paul@developai.co.za, find me on LinkedIn or chat to me on our WhatsApp Community.